Applying Chain-of-Thought to AI-enhanced human thinking

Among the most important recent innovations for improving the value and reliability of Large Language Models are Chain-of-Thought and its derivatives, including Tree-of-Thought and Graph-of-Thought.

These structures are also extremely valuable in designing effective Humans + AI workflows for better thinking.

In this article I’ll provide a high-level view of Chain-of-Thought and then look at applications to AI-augmented human intelligence.

Chain-of-Thought

Large Language Models (LLMs) are generally excellent at text generation, but have historically been weak at tasks that involve multi-step, sequential reasoning.

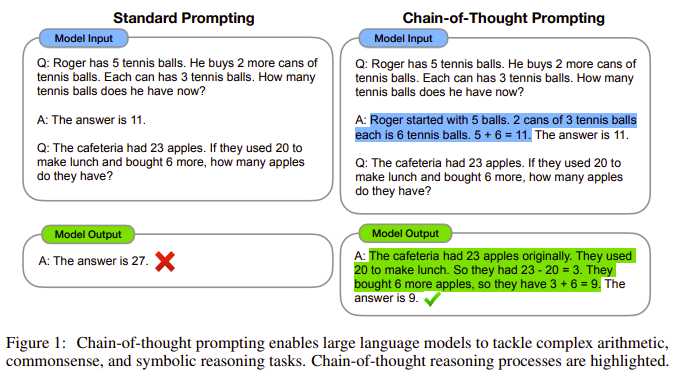

The landmark January 2022 paper Chain-of-Thought Prompting Elicits Reasoning in Large Language Models laid out how a chain of thought — “a series of intermediate reasoning steps” — could substantially improve LLM performance at reasoning tasks including maths and commonsense puzzles.

You have likely seen this image from the paper doing the rounds.

This concept was quickly adapted to other applications, including temporal reasoning, visual language models, retrieval-augmented reasoning, and many other ways of improving the performance of AI models.

Chain-of-thought has proved particularly valuable in practical problem-solving applications. Obvious examples include medicine, law, and education.

Google’s PaLM and Med-PaLM incorporate chain-of-thought structures, and OpenAI’s GPT-4 very likely does too, meaning that when you use one of these LLMs, these approaches may already be built in.

Even so, famously, the prompt “Let’s work this out in a step by step way to be sure we have the right answer” and variations on it have been reported to give the best LLM performance on many kinds of tasks.
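To make the difference concrete, here is a minimal Python sketch of the two common ways of eliciting a chain of thought: few-shot prompting with worked examples whose intermediate steps are written out, and a zero-shot trigger phrase appended to the question. The `Exemplar` type, the function names, and the example questions are hypothetical, invented for illustration; the output is simply a prompt string you would send to whichever LLM you use.

```python
# Illustrative sketch of building chain-of-thought prompts (names are hypothetical).
from dataclasses import dataclass
from typing import List


@dataclass
class Exemplar:
    question: str
    reasoning: str  # the intermediate steps, written out in full
    answer: str


ZERO_SHOT_TRIGGER = (
    "Let's work this out in a step by step way "
    "to be sure we have the right answer."
)


def few_shot_cot_prompt(exemplars: List[Exemplar], question: str) -> str:
    """Few-shot CoT: each worked example shows its reasoning before the answer."""
    blocks = [
        f"Q: {ex.question}\nA: {ex.reasoning} The answer is {ex.answer}."
        for ex in exemplars
    ]
    blocks.append(f"Q: {question}\nA:")  # the model continues from here
    return "\n\n".join(blocks)


def zero_shot_cot_prompt(question: str) -> str:
    """Zero-shot CoT: no examples, just an instruction to reason step by step."""
    return f"Q: {question}\nA: {ZERO_SHOT_TRIGGER}"


if __name__ == "__main__":
    demo = Exemplar(
        question="A shelf holds 3 rows of 4 books. 5 are removed. How many remain?",
        reasoning="3 rows of 4 books is 12 books. 12 - 5 = 7.",
        answer="7",
    )
    new_question = "A box has 2 bags of 6 apples. 4 are eaten. How many are left?"
    print(few_shot_cot_prompt([demo], new_question))
    print()
    print(zero_shot_cot_prompt(new_question))
```

The few-shot version teaches the format of intermediate steps by example, while the zero-shot version relies on the instruction alone.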

Evolution of Chain-of-Thought

A number of innovations have emerged by building on Chain-of-Thought.

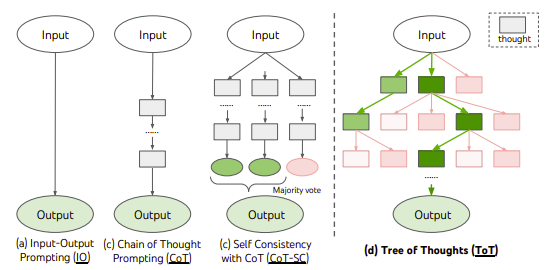

Effective reasoning processes do not necessarily follow a single trajectory. This leads to Tree-of-Thought structures, described in Tree of Thoughts: Deliberate Problem Solving with Large Language Models.

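As shown in this diagram from the paper, Chain-of-Thought can progress first to Self-Consistency (CoT-SC), which samples multiple reasoning chains and selects the most frequent answer, and then to Tree-of-Thought, which explores multiple branching paths through the thinking process, evaluates them, and pursues the most promising ones.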
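The difference between these strategies can be sketched in a few lines of Python. This is a toy under stated assumptions: `propose()` and `evaluate()` are ordinary functions standing in for the LLM calls that would generate and score candidate thoughts, and the number puzzle is invented. The tree search shown is a simple breadth-first beam search, one of the search strategies associated with Tree-of-Thought, not the paper's own implementation.

```python
# Toy sketch contrasting self-consistency (CoT-SC) with a Tree-of-Thought-style
# beam search. In practice propose() and evaluate() would be LLM calls.
from collections import Counter
from typing import Callable, List, Optional


def self_consistency(final_answers: List[str]) -> str:
    """CoT-SC: sample several reasoning chains, keep the most frequent answer."""
    answer, _count = Counter(final_answers).most_common(1)[0]
    return answer


def tree_of_thought(
    root: int,
    propose: Callable[[int], List[int]],
    evaluate: Callable[[int], float],
    is_goal: Callable[[int], bool],
    beam_width: int = 2,
    max_depth: int = 5,
) -> Optional[List[int]]:
    """BFS-style search: expand every kept path into candidate next thoughts,
    score them, and keep only the best `beam_width` paths at each depth."""
    frontier = [[root]]
    for _ in range(max_depth):
        candidates = []
        for path in frontier:
            for nxt in propose(path[-1]):
                new_path = path + [nxt]
                if is_goal(nxt):
                    return new_path
                candidates.append(new_path)
        if not candidates:
            return None
        # Prune: rank partial paths by the evaluator's score of their last thought.
        candidates.sort(key=lambda p: evaluate(p[-1]), reverse=True)
        frontier = candidates[:beam_width]
    return None


# Hypothetical toy problem: reach 10 from 1 using "+3" or "*2" steps.
TARGET = 10


def propose(x: int) -> List[int]:
    return [x + 3, x * 2]


def evaluate(x: int) -> float:
    return -abs(TARGET - x)  # closer to the target scores higher


def is_goal(x: int) -> bool:
    return x == TARGET


print(self_consistency(["11", "11", "9"]))                            # 11
print(tree_of_thought(1, propose, evaluate, is_goal, beam_width=1))   # None
print(tree_of_thought(1, propose, evaluate, is_goal, beam_width=2))   # [1, 4, 7, 10]
```

With `beam_width=1` the search follows a single greedy path, commits early to 8, and never reaches the target; keeping two candidate paths lets it recover via 7. That is the intuition behind exploring several reasoning branches rather than a single chain.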

More recent developments building on Chain-of-Thought include the very promising Graph-of-Thought, as well as Hypergraph-of-Thought.
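What distinguishes a graph from a tree is that a single thought can combine several earlier thoughts. The following is a toy illustration of that idea, assuming plain Python functions in place of LLM calls: a list is split into two branches that are sorted independently, and a final "merge" thought aggregates both. The `Thought` class and `resolve` helper are hypothetical names, not an API from any Graph-of-Thought library.

```python
# Toy Graph-of-Thought-style sketch. Thoughts are nodes in a directed acyclic
# graph; unlike in a tree, one thought can aggregate several parent thoughts.
# Plain functions stand in for the LLM operations (an assumption for clarity).
import heapq
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Optional


@dataclass
class Thought:
    name: str
    operation: Callable[..., Any]  # receives the parents' results as arguments
    parents: List["Thought"] = field(default_factory=list)


def resolve(node: Thought, cache: Optional[Dict[str, Any]] = None) -> Any:
    """Compute a thought after all of its parents, memoising results by name."""
    if cache is None:
        cache = {}
    if node.name not in cache:
        inputs = [resolve(parent, cache) for parent in node.parents]
        cache[node.name] = node.operation(*inputs)
    return cache[node.name]


def sort_via_graph(data: List[int]) -> List[int]:
    """Split the input into two branches, sort each, then merge (aggregate)."""
    mid = len(data) // 2
    root = Thought("input", lambda: list(data))
    left = Thought("sort left half", lambda xs: sorted(xs[:mid]), [root])
    right = Thought("sort right half", lambda xs: sorted(xs[mid:]), [root])
    merged = Thought("merge", lambda a, b: list(heapq.merge(a, b)), [left, right])
    return resolve(merged)


print(sort_via_graph([5, 1, 4, 2, 8, 3]))  # [1, 2, 3, 4, 5, 8]
```

Because `resolve` caches results by name, the shared `input` thought is computed once even though both branches depend on it; node names therefore need to be unique.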

Novel ‘thinking’ structures will be central to generative AI progress

Chain-of-Thought and related techniques were created to address the limitations of LLMs and enhance their capabilities.

The continued advance of generative AI models will rely far more on these kinds of structured thinking techniques than on compute capacity or model size. These approaches have already enabled small, efficient LLMs to achieve performance approaching that of the largest models on some tasks.

Chain-of-Thought and similar techniques also lead directly to multi-agent chains, in which chains or networks of thought are laid out across multiple task-optimized models to produce reasoning and outcomes far superior to what a single model can achieve.
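A multi-agent chain can be pictured as a pipeline in which each stage is a different specialized model working on shared state. In this minimal Python sketch the three agents (`drafter`, `critic`, `reviser`) are hypothetical stand-ins written as ordinary functions; in a real system each would call its own model or prompt.

```python
# Minimal sketch of a multi-agent chain. Each stage stands in for a separate,
# task-optimized model; the agent names and behaviours are hypothetical.
from typing import Callable, Dict, List, Tuple

Agent = Callable[[Dict], Dict]


def run_chain(agents: List[Tuple[str, Agent]], state: Dict) -> Dict:
    """Pass a shared state through each agent in turn, recording who acted."""
    state = {**state, "trace": []}
    for name, agent in agents:
        update = agent(state)  # each agent returns only the fields it adds
        state = {**state, **update, "trace": state["trace"] + [name]}
    return state


def drafter(state: Dict) -> Dict:
    return {"draft": f"Draft answer to: {state['question']}"}


def critic(state: Dict) -> Dict:
    # A stand-in check; a real critic model would review the draft's reasoning.
    return {"issues": [] if "source" in state["draft"] else ["cite a source"]}


def reviser(state: Dict) -> Dict:
    if not state["issues"]:
        return {"final": state["draft"]}
    return {"final": f"{state['draft']} [revised: {'; '.join(state['issues'])}]"}


result = run_chain(
    [("drafter", drafter), ("critic", critic), ("reviser", reviser)],
    {"question": "Why use chain-of-thought?"},
)
print(result["trace"])  # ['drafter', 'critic', 'reviser']
print(result["final"])
```

Keeping a `trace` of which agent acted makes it easy to see, and later audit, how the chain arrived at its output.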

Augmented intelligence is more important than Artificial General Intelligence

“Technology should not aim to replace humans, rather amplify human capabilities.” — Doug Engelbart

The driving force behind almost all AI development seems to be the goal of creating machines that can emulate, and potentially exceed, human intelligence and capabilities.

That is an understandable ambition.

But I am far, far more interested in how AI can augment human intelligence.

We can work on both domains at once.

But in every possible scenario for progress towards Artificial General Intelligence, we will be better off if we have put at least equal energy into building, learning, and applying Humans + AI thinking structures.

Humans + AI Thinking Workflows

The concept of Humans + AI is at the heart of my work.

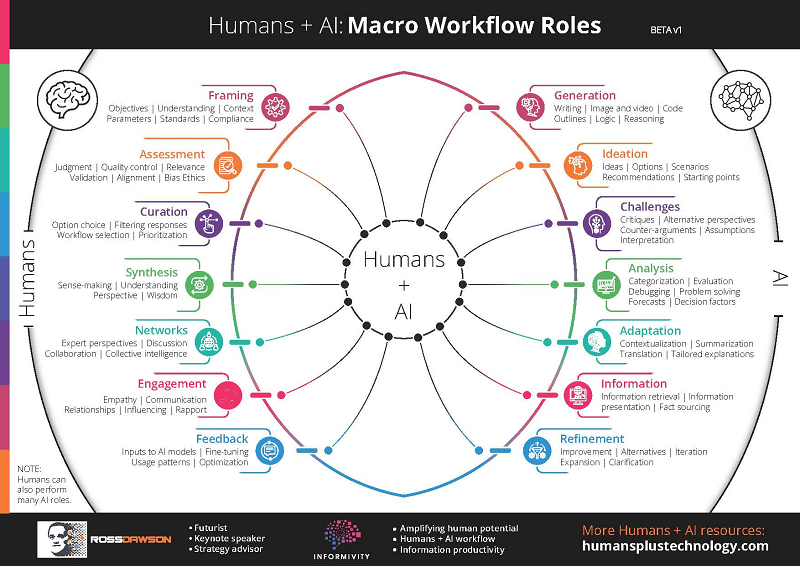

This framework, which I created a year ago, shows my early framing of “Humans + AI workflows”, in which people and AI sequentially address the tasks to which they are best suited.

If well designed, this inevitably generates outcomes superior to what either could achieve alone.

Since then I have been digging into far more detail on which specific Humans + AI thinking structures work best.

These will be the foundations of the next phase of augmented human intelligence.

Chain-of-Thought for AI-Enhanced Human Thinking

The concepts flowing from Chain-of-Thought were developed to enhance the stand-alone capabilities of LLMs.

However, they also prove to be immensely valuable in maximizing the value of humans and AI working together.

There are a range of techniques for applying Chain-of-Thought structures to Humans + AI thinking workflows.

AI concepts applied to augmented intelligence

LLMs can be used to suggest how tasks can be decomposed into sequential (or networked) elements, with either humans or AI identifying where human or AI capabilities may be best suited.
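One simple way to picture this is to tag each sub-task with the capabilities it needs and route it accordingly. This Python sketch assumes a decomposition has already been proposed (hard-coded here in place of an LLM call); the capability tags, the `assign` rule, and the example plan are illustrative assumptions rather than a prescribed method.

```python
# Sketch of routing decomposed sub-tasks to humans, AI, or both. The sub-task
# list stands in for a decomposition an LLM might propose; the capability tags
# and the scoring rule are illustrative assumptions, not a fixed method.
from dataclasses import dataclass
from typing import List, Set

HUMAN_STRENGTHS = {"judgment", "values", "context"}
AI_STRENGTHS = {"recall", "generation", "summarization"}


@dataclass
class SubTask:
    description: str
    needs: Set[str]


def assign(task: SubTask) -> str:
    """Route a sub-task by counting which side's strengths it draws on."""
    human = len(task.needs & HUMAN_STRENGTHS)
    ai = len(task.needs & AI_STRENGTHS)
    if human and ai:
        return "human + AI"
    # Ties (including no matches) default to the human, keeping people in oversight.
    return "AI" if ai > human else "human"


plan: List[SubTask] = [
    SubTask("Gather market data", {"recall"}),
    SubTask("Generate strategic options", {"generation"}),
    SubTask("Weigh options against company values", {"judgment", "values"}),
    SubTask("Draft the recommendation memo", {"generation", "context"}),
]

for step, task in enumerate(plan, start=1):
    print(f"{step}. {task.description}: {assign(task)}")
```

In practice the tags themselves could be proposed by the LLM and then reviewed by a person before the plan runs.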

One specific approach is described in Human-in-the-Loop through Chain-of-Thought, in which “manual correction of sub-logics in rationales can improve LLM’s reasoning performance.”
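The core idea of correcting individual sub-steps can be illustrated with a toy arithmetic rationale. In this Python sketch, `human_check` stands in for a person verifying each step, and simple arithmetic stands in for the model regenerating everything after the corrected step; the function names and the example are hypothetical and are not taken from the paper.

```python
# Toy sketch of human-in-the-loop correction of a chain-of-thought rationale.
# A rationale is a list of (operation, operand) steps plus the value the model
# wrote at each step. human_check stands in for a human reviewer, and plain
# arithmetic stands in for the model regenerating the later steps.
import operator
from typing import Callable, List, Optional, Tuple

OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul}
Step = Tuple[str, int]


def first_wrong_step(start: int, steps: List[Step], values: List[int],
                     check: Callable[[int, str, int, int], bool]) -> Optional[int]:
    """Walk the rationale and return the index of the first rejected sub-step."""
    prev = start
    for i, ((op, n), value) in enumerate(zip(steps, values)):
        if not check(prev, op, n, value):
            return i
        prev = value
    return None


def correct_and_continue(steps: List[Step], values: List[int],
                         i: int, corrected: int) -> List[int]:
    """Keep steps before i, substitute the corrected value at i, redo the rest."""
    fixed = values[:i] + [corrected]
    current = corrected
    for op, n in steps[i + 1:]:
        current = OPS[op](current, n)
        fixed.append(current)
    return fixed


def human_check(prev: int, op: str, n: int, value: int) -> bool:
    # Stand-in for a person verifying one sub-step of the reasoning.
    return OPS[op](prev, n) == value


steps = [("+", 5), ("*", 3), ("-", 4)]  # start at 2: 2+5, then *3, then -4
model_values = [7, 24, 20]              # the model slipped at step 1 (7*3 != 24)

bad = first_wrong_step(2, steps, model_values, human_check)
print(bad)                                                            # 1
print(correct_and_continue(steps, model_values, bad, OPS["*"](7, 3)))  # [7, 21, 17]
```

Only the faulty sub-step needs human attention; everything downstream of it is recomputed rather than re-checked by hand.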

“Framing” the objectives, task, and structure, as shown in the Humans + AI workflow diagram, drives the quality of outcomes. This is usually best overseen by humans, in flows where AI proposes or assesses parameters.

I am incorporating these and other approaches into a set of “AI-Enhanced Thinking Patterns”.

More generally, a wide variety of AI advances, not just Chain-of-Thought, can be applied very usefully to augmenting human intelligence.

I intend to write a similar article about applying the concepts of Generative Adversarial Networks to Human-AI symbiotic intelligence structures.

Course on AI-Enhanced Thinking & Decision-Making

My complete focus in 2024 is how AI can augment humans.

One of my central activities is running a regular cohort course on Maven: AI-Enhanced Thinking & Decision-Making. Check out the link for more details.

The next cohort starts February 8. As a thank you for reading through to the end of this article, you can get a 30% discount by using the coupon: COTARTICLE 🙂.